OpenChatKit Environment Setup

Founder

2025-05-28 01:58:07


CUDA 11.8, cuDNN 8.6 and TensorRT were installed beforehand.
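Because the PyTorch wheels installed later are the cu118 builds, it is worth confirming that the toolkit on PATH really is 11.8. Below is a small sketch, not part of OpenChatKit: it assumes nvcc is on PATH (a default install typically puts it in /usr/local/cuda/bin, which may need adding) and relies on the "release X.Y" phrase that nvcc --version prints. parse_nvcc_release and local_cuda_release are hypothetical helper names.

```python
import re
import shutil
import subprocess


def parse_nvcc_release(text):
    """Return (major, minor) from `nvcc --version` output, or None if absent."""
    # nvcc prints a line of the form "Cuda compilation tools, release 11.8, V11.8.89"
    match = re.search(r"release (\d+)\.(\d+)", text)
    if match is None:
        return None
    return int(match.group(1)), int(match.group(2))


def local_cuda_release():
    """Run nvcc if it is on PATH and parse its version; None otherwise."""
    nvcc = shutil.which("nvcc")
    if nvcc is None:
        return None
    out = subprocess.run([nvcc, "--version"], capture_output=True, text=True)
    return parse_nvcc_release(out.stdout)


if __name__ == "__main__":
    release = local_cuda_release()
    print("nvcc release:", release)
    if release is not None and release != (11, 8):
        print("warning: the cu118 PyTorch wheels used later target CUDA 11.8")
```

PyTorch's pip wheels ship their own CUDA runtime libraries, so treat a mismatch here as a sanity-check warning rather than proof that the later steps will fail.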

Install Miniconda

Download the source code:

$ git clone --recursive https://github.com/togethercomputer/OpenChatKit.git

Install Miniconda:

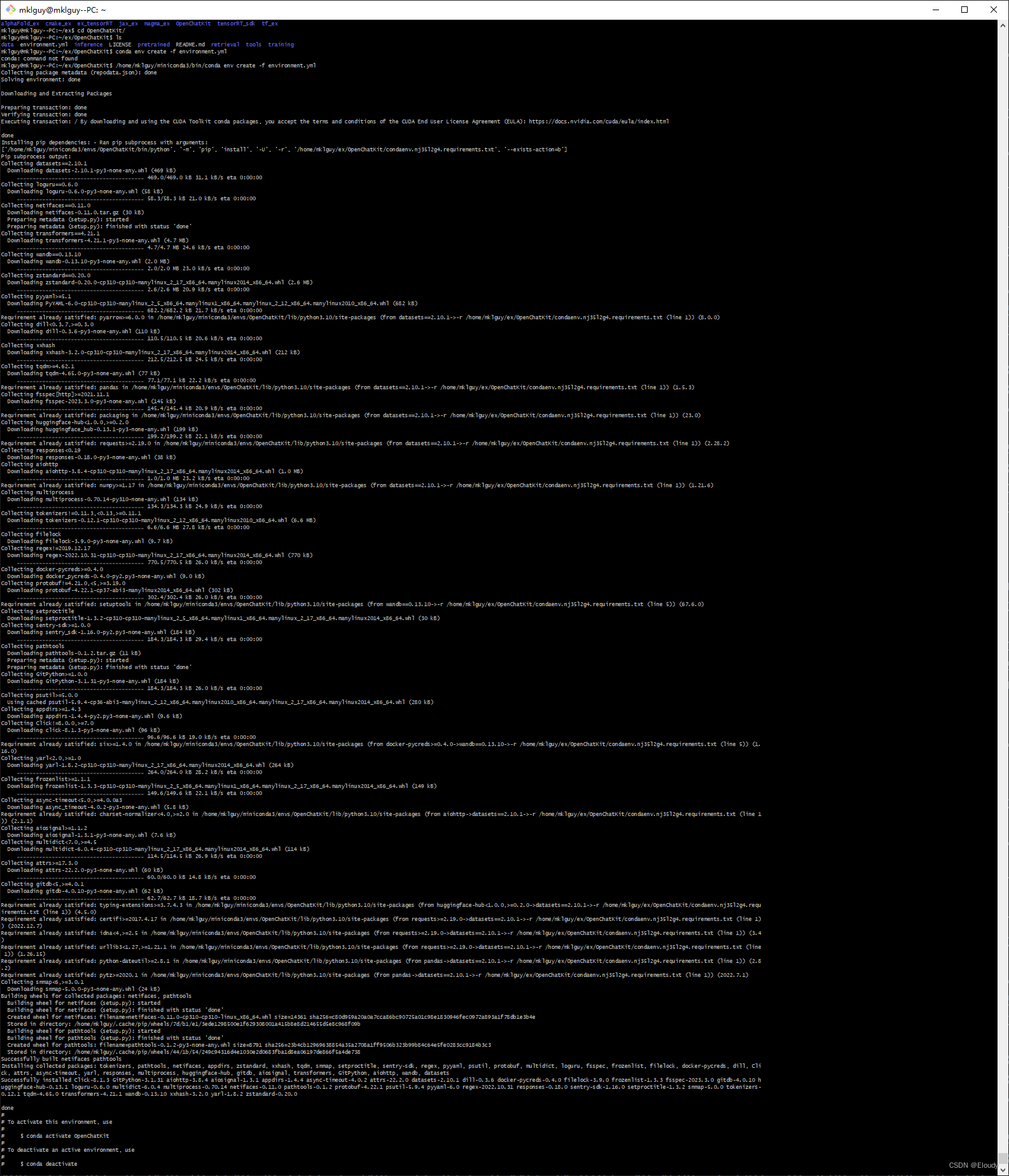

$ wget https://repo.anaconda.com/miniconda/Miniconda3-latest-Linux-x86_64.sh
$ sh ./Miniconda3-latest-Linux-x86_64.sh
$ /home/mklguy/miniconda3/bin/conda init
mklguy@mklguy--PC:~/ex/OpenChatKit$ /home/mklguy/miniconda3/bin/conda env create -f environment.yml

With a VPN in the way, the environment was still created successfully.
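To confirm the environment exists without scrolling through conda's output, one can read the JSON that conda env list --json prints (an object with an "envs" list of environment prefixes). A minimal sketch; it assumes OpenChatKit's environment.yml names the environment OpenChatKit, and env_names and has_env are hypothetical helpers. Note that the base environment shows up under the name of the Miniconda root directory (here miniconda3).

```python
import json
import os
import shutil
import subprocess


def env_names(conda_json):
    """Extract environment names from `conda env list --json` output."""
    # "envs" holds absolute prefixes: the Miniconda root for base,
    # <root>/envs/<name> for every other environment.
    prefixes = json.loads(conda_json).get("envs", [])
    return [os.path.basename(p.rstrip("/")) for p in prefixes]


def has_env(name, conda="conda"):
    """True if `conda` (a name on PATH or a full path) lists an env called name."""
    exe = shutil.which(conda)
    if exe is None:
        return False
    out = subprocess.run([exe, "env", "list", "--json"],
                         capture_output=True, text=True, check=True)
    return name in env_names(out.stdout)
```

Usage, with the full conda path from the commands above: has_env("OpenChatKit", conda="/home/mklguy/miniconda3/bin/conda").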

After conda init, the following block appears in ~/.bashrc, so every new shell starts inside the conda base environment:

# >>> conda initialize >>>
# !! Contents within this block are managed by 'conda init' !!
__conda_setup="$('/home/mklguy/miniconda3/bin/conda' 'shell.bash' 'hook' 2> /dev/null)"
if [ $? -eq 0 ]; then
    eval "$__conda_setup"
else
    if [ -f "/home/mklguy/miniconda3/etc/profile.d/conda.sh" ]; then
        . "/home/mklguy/miniconda3/etc/profile.d/conda.sh"
    else
        export PATH="/home/mklguy/miniconda3/bin:$PATH"
    fi
fi
unset __conda_setup
# <<< conda initialize <<<

Back up the file, then try deleting this block.
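The "back up, then delete" step can also be scripted. A minimal sketch, assuming the block is delimited by exactly the two marker lines shown above; strip_conda_block and remove_conda_init are hypothetical helper names, and the backup is written next to the original as .bashrc.bak.

```python
from pathlib import Path
import shutil

START = "# >>> conda initialize >>>"
END = "# <<< conda initialize <<<"


def strip_conda_block(text):
    """Return text without the conda-init block (marker lines included).

    If either marker is missing, the text is returned unchanged so a
    half-edited file is never truncated.
    """
    lines = text.splitlines(keepends=True)
    stripped = [line.strip() for line in lines]
    if START not in stripped or END not in stripped:
        return text
    out, skipping = [], False
    for line, bare in zip(lines, stripped):
        if bare == START:
            skipping = True
        elif bare == END:
            skipping = False
        elif not skipping:
            out.append(line)
    return "".join(out)


def remove_conda_init(rc_path=None):
    """Copy rc_path (default ~/.bashrc) to <name>.bak, then rewrite it without the block."""
    rc_path = Path(rc_path) if rc_path else Path.home() / ".bashrc"
    backup = rc_path.with_name(rc_path.name + ".bak")
    shutil.copy2(rc_path, backup)  # keep the original before editing
    rc_path.write_text(strip_conda_block(rc_path.read_text()))
    return backup
```

If the goal is only to stop the automatic activation while keeping the conda command available, conda config --set auto_activate_base false is a less invasive alternative to deleting the block.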

$ source ~/.bashrc

Alternatively, use pip3 to install a system-wide PyTorch as well:

$ pip3 install --pre torch torchvision torchaudio --index-url https://download.pytorch.org/whl/nightly/cu118

Test it with the classic MNIST example, saved as hello_mnist.py:

from __future__ import print_function
import torch
import torch.nn as nn
import torch.nn.functional as F
import torch.optim as optim
from torchvision import datasets, transforms
from torch.optim.lr_scheduler import StepLR
import argparse


class Net(nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.conv1 = nn.Conv2d(1, 32, 3, 1)
        self.conv2 = nn.Conv2d(32, 64, 3, 1)
        self.dropout1 = nn.Dropout(0.25)
        self.dropout2 = nn.Dropout(0.5)
        self.fc1 = nn.Linear(9216, 128)
        self.fc2 = nn.Linear(128, 10)

    def forward(self, x):
        x = self.conv1(x)
        x = F.relu(x)
        x = self.conv2(x)
        x = F.relu(x)
        x = F.max_pool2d(x, 2)
        x = self.dropout1(x)
        x = torch.flatten(x, 1)
        x = self.fc1(x)
        x = F.relu(x)
        x = self.dropout2(x)
        x = self.fc2(x)
        output = F.log_softmax(x, dim=1)
        return output


def train(args, model, device, train_loader, optimizer, epoch):
    model.train()
    for batch_idx, (data, target) in enumerate(train_loader):
        data, target = data.to(device), target.to(device)
        optimizer.zero_grad()
        output = model(data)
        loss = F.nll_loss(output, target)
        loss.backward()
        optimizer.step()
        if batch_idx % args.log_interval == 0:
            print('Train Epoch: {} [{}/{} ({:.0f}%)]\tLoss: {:.6f}'.format(
                epoch, batch_idx * len(data), len(train_loader.dataset),
                100. * batch_idx / len(train_loader), loss.item()))
            if args.dry_run:
                break


def test(model, device, test_loader):
    model.eval()
    test_loss = 0
    correct = 0
    with torch.no_grad():
        for data, target in test_loader:
            data, target = data.to(device), target.to(device)
            output = model(data)
            test_loss += F.nll_loss(output, target, reduction='sum').item()  # sum up batch loss
            pred = output.argmax(dim=1, keepdim=True)  # get the index of the max log-probability
            correct += pred.eq(target.view_as(pred)).sum().item()

    test_loss /= len(test_loader.dataset)

    print('\nTest set: Average loss: {:.4f}, Accuracy: {}/{} ({:.0f}%)\n'.format(
        test_loss, correct, len(test_loader.dataset),
        100. * correct / len(test_loader.dataset)))


def main():
    # Training settings
    parser = argparse.ArgumentParser(description='PyTorch MNIST Example')
    parser.add_argument('--batch-size', type=int, default=64, metavar='N',
                        help='input batch size for training (default: 64)')
    parser.add_argument('--test-batch-size', type=int, default=1000, metavar='N',
                        help='input batch size for testing (default: 1000)')
    parser.add_argument('--epochs', type=int, default=14, metavar='N',
                        help='number of epochs to train (default: 14)')
    parser.add_argument('--lr', type=float, default=1.0, metavar='LR',
                        help='learning rate (default: 1.0)')
    parser.add_argument('--gamma', type=float, default=0.7, metavar='M',
                        help='Learning rate step gamma (default: 0.7)')
    parser.add_argument('--no-cuda', action='store_true', default=False,
                        help='disables CUDA training')
    parser.add_argument('--dry-run', action='store_true', default=False,
                        help='quickly check a single pass')
    parser.add_argument('--seed', type=int, default=1, metavar='S',
                        help='random seed (default: 1)')
    parser.add_argument('--log-interval', type=int, default=10, metavar='N',
                        help='how many batches to wait before logging training status')
    parser.add_argument('--save-model', action='store_true', default=False,
                        help='For Saving the current Model')
    args = parser.parse_args()
    use_cuda = not args.no_cuda and torch.cuda.is_available()

    torch.manual_seed(args.seed)

    device = torch.device("cuda" if use_cuda else "cpu")

    train_kwargs = {'batch_size': args.batch_size}
    test_kwargs = {'batch_size': args.test_batch_size}
    if use_cuda:
        cuda_kwargs = {'num_workers': 1,
                       'pin_memory': True,
                       'shuffle': True}
        train_kwargs.update(cuda_kwargs)
        test_kwargs.update(cuda_kwargs)

    transform = transforms.Compose([
        transforms.ToTensor(),
        transforms.Normalize((0.1307,), (0.3081,))
    ])
    dataset1 = datasets.MNIST('../data', train=True, download=True,
                              transform=transform)
    dataset2 = datasets.MNIST('../data', train=False,
                              transform=transform)
    train_loader = torch.utils.data.DataLoader(dataset1, **train_kwargs)
    test_loader = torch.utils.data.DataLoader(dataset2, **test_kwargs)

    model = Net().to(device)
    optimizer = optim.Adadelta(model.parameters(), lr=args.lr)

    scheduler = StepLR(optimizer, step_size=1, gamma=args.gamma)
    for epoch in range(1, args.epochs + 1):
        train(args, model, device, train_loader, optimizer, epoch)
        test(model, device, test_loader)
        scheduler.step()

    if args.save_model:
        torch.save(model.state_dict(), "mnist_cnn.pt")


if __name__ == '__main__':
    main()

$ python3 hello_mnist.py

Result: 99% test accuracy.
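If the MNIST run silently falls back to the CPU, a quicker check is to ask the installed PyTorch what it was built with. A minimal sketch; torch_cuda_report is a hypothetical helper, and it returns None when torch is not importable from the interpreter running it (which can happen when the system pip3 and the conda python are different interpreters).

```python
import importlib.util


def torch_cuda_report():
    """Summarize the installed PyTorch build, or return None if torch is absent."""
    if importlib.util.find_spec("torch") is None:
        return None
    import torch

    report = {
        "torch": torch.__version__,
        "built_for_cuda": torch.version.cuda,        # "11.8" for cu118 wheels, None for CPU builds
        "cuda_available": torch.cuda.is_available(),
        "cudnn": torch.backends.cudnn.version(),     # integer version, or None without cuDNN
    }
    if report["cuda_available"]:
        report["device"] = torch.cuda.get_device_name(0)
    return report


if __name__ == "__main__":
    print(torch_cuda_report())
```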

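Install Git LFS:

$ git lfs install

This failed because the system git was too old. It was eventually solved by removing the packaged git, building git 2.40.0 from source, and installing git-lfs 3.3.0 from its release tarball; the steps follow.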
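To see which git is actually on PATH before and after the rebuild, and whether the git-lfs binary is installed next to it, one can parse git --version. A minimal sketch: parse_git_version and check_git are hypothetical helpers, and the default minimum of 2.40.0 is simply the version built in this walkthrough, not a documented Git LFS requirement.

```python
import re
import shutil
import subprocess


def parse_git_version(text):
    """Turn 'git version 2.40.0' (vendor suffixes allowed) into a tuple of ints."""
    match = re.search(r"git version (\d+)\.(\d+)(?:\.(\d+))?", text)
    if match is None:
        return None
    return tuple(int(g or 0) for g in match.groups())


def check_git(minimum=(2, 40, 0)):
    """Report the git on PATH, whether it meets `minimum`, and whether git-lfs exists."""
    git = shutil.which("git")
    if git is None:
        return {"git": None, "new_enough": False, "lfs": False}
    out = subprocess.run([git, "--version"], capture_output=True, text=True)
    version = parse_git_version(out.stdout)
    return {
        "git": version,
        "new_enough": version is not None and version >= minimum,
        "lfs": shutil.which("git-lfs") is not None,
    }


if __name__ == "__main__":
    print(check_git())
```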

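$ sudo apt-get autoremove git

Errors hit while building git from source, each followed by the package that fixed it:

/bin/sh: msgfmt: command not found
$ sudo apt-get install gettext
/bin/sh: 1: asciidoc: not found
$ sudo apt-get install asciidoc
/bin/sh: 1: docbook2x-texi: not found
$ sudo apt-get install docbook2x

All build dependencies in one command:

$ sudo apt-get install texinfo perl openjade dh-autoreconf autoconf libcurl4-gnutls-dev libexpat1-dev gettext zlib1g-dev libssl-dev asciidoc xmlto docbook2x

Build git 2.40.0 (the make step as a normal user, the install step as root):

$ wget https://www.kernel.org/pub/software/scm/git/git-2.40.0.tar.gz
$ tar -xzf git-2.40.0.tar.gz
$ cd git-2.40.0
$ make prefix=/usr all doc info
$ sudo make prefix=/usr install install-doc install-html install-info

Install git-lfs 3.3.0 from the release tarball, running install.sh from the directory the archive extracts to:

$ wget https://github.com/git-lfs/git-lfs/releases/download/v3.3.0/git-lfs-linux-amd64-v3.3.0.tar.gz
$ tar -xzf git-lfs-linux-amd64-v3.3.0.tar.gz
$ sudo ./install.sh

That worked.

Install Hugging Face transformers: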

$ pip3 install transformers

Download the model weights and run a test:

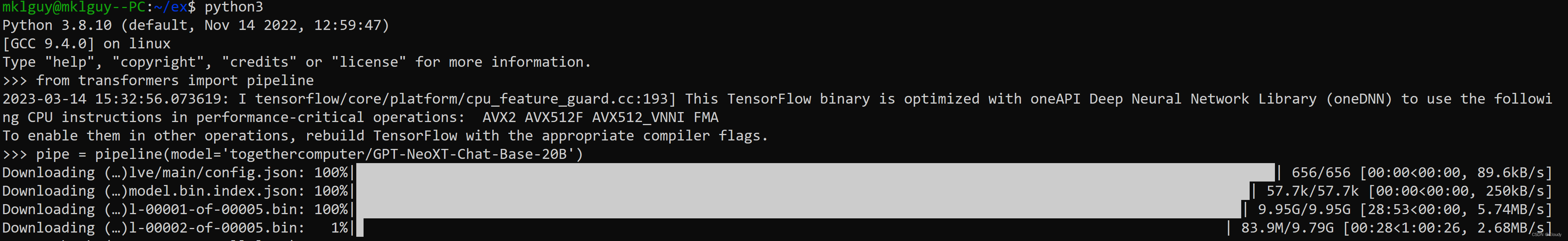

from transformers import pipeline
pipe = pipeline(model='togethercomputer/GPT-NeoXT-Chat-Base-20B')
pipe('''<human>: Hello!\n<bot>:''')
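For more than a single greeting, the prompt has to carry the whole conversation. A minimal sketch, assuming the "<human>: …" / "<bot>: …" turn format that OpenChatKit's chat model is prompted with, and assuming the text-generation pipeline's default of returning the prompt plus the continuation. build_prompt and reply_from are hypothetical helpers.

```python
def build_prompt(turns, next_speaker="bot"):
    """Format (speaker, text) pairs as '<speaker>: text' lines.

    The prompt ends with an open '<bot>:' so the model writes the next reply.
    """
    lines = []
    for speaker, text in turns:
        if speaker not in ("human", "bot"):
            raise ValueError(f"unknown speaker: {speaker!r}")
        lines.append(f"<{speaker}>: {text}")
    lines.append(f"<{next_speaker}>:")
    return "\n".join(lines)


def reply_from(generated, prompt):
    """Cut the bot's reply out of the generated text.

    Drops the echoed prompt (if present) and stops at the next '<human>:' tag,
    since the model may keep writing both sides of the dialogue.
    """
    continuation = generated[len(prompt):] if generated.startswith(prompt) else generated
    return continuation.split("<human>:")[0].strip()
```

Usage with the pipe created above: prompt = build_prompt([("human", "Hello!")]), then out = pipe(prompt, max_new_tokens=64)[0]["generated_text"] and print(reply_from(out, prompt)).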

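Creating the pipeline (the pipe = pipeline(...) line) is what triggers the download: the first batch alone is nearly 50 GB of model weights.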
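Given a first download of nearly 50 GB, it helps to check free space on the disk that holds the Hugging Face cache before creating the pipeline. A minimal sketch: hf_cache_dir guesses the cache root from the HF_HOME environment variable or the default ~/.cache/huggingface, the 50 GB default comes from the figure above and is a lower bound rather than the model's exact size, and all three function names are hypothetical.

```python
import os
import shutil
from pathlib import Path


def hf_cache_dir():
    """Best-effort guess at the Hugging Face cache root (HF_HOME or the default)."""
    return Path(os.environ.get("HF_HOME", Path.home() / ".cache" / "huggingface"))


def free_gb(path):
    """Free space, in GB (10**9 bytes), on the filesystem holding path."""
    path = Path(path)
    # The cache directory may not exist before the first download,
    # so walk up to the nearest existing ancestor.
    while not path.exists():
        path = path.parent
    return shutil.disk_usage(path).free / 1e9


def enough_room(path, needed_gb=50):
    """True if at least needed_gb is free where path lives."""
    return free_gb(path) >= needed_gb


if __name__ == "__main__":
    cache = hf_cache_dir()
    print(f"{cache}: {free_gb(cache):.1f} GB free, enough: {enough_room(cache)}")
```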
